I’m going to tell you something most AI vendors would rather you didn’t hear: 85% of AI projects fail to meet expectations. And it’s almost never because of the algorithm.

It’s the data. It’s always the data.

I’ve spent years watching facilities teams get sold on AI promises — predictive maintenance, energy savings, smarter capital planning — only to watch those projects stall or fail. Not because the technology doesn’t work, but because nobody told them the unsexy truth first: every AI vendor has a good algorithm. Your competitive advantage is data quality, and most FM organizations don’t have it.

Here’s how to know if yours is AI-ready, and what to do if it’s not.

Three Red Flags Your Data Isn't AI-Ready

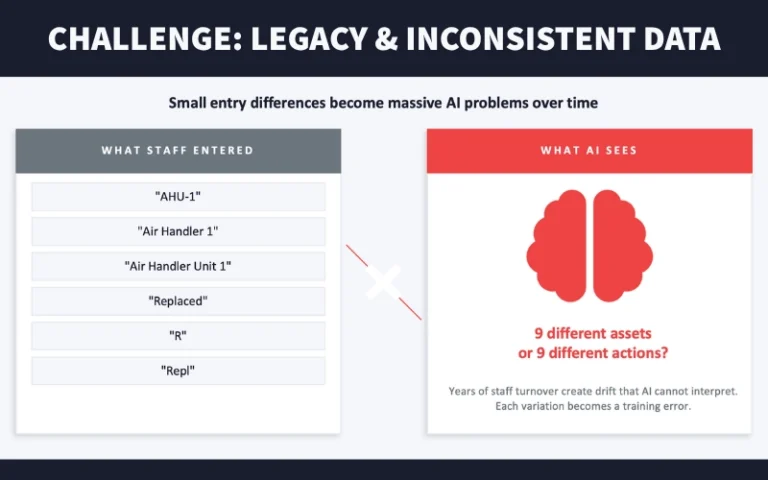

1. Multiple Naming Variants Per Asset Type

Pull up your CMMS right now. Search for air handling units. How many ways did your team enter “AHU-1”? If you’re seeing AHU-1, Air Handler 1, Air Handler Unit 1, and AHU #1 — that’s four different assets to AI. Years of staff turnover create drift that algorithms can’t interpret. Each variation becomes a training error. When I see more than three naming variants per asset category, I know AI deployment needs to wait.
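If you want to count variants rather than eyeball them, here is a minimal sketch of how that check might look. It assumes you can export asset names from your CMMS as a plain list; the `TYPE_SYNONYMS` table and both function names are hypothetical, and a real export would need a far longer synonym list.

```python
import re
from collections import defaultdict

# Hypothetical synonym table: maps spelled-out asset types to one canonical code.
# Longer phrases come first so "air handler unit" wins over "air handler".
TYPE_SYNONYMS = [
    ("air handling unit", "AHU"),
    ("air handler unit", "AHU"),
    ("air handler", "AHU"),
    ("ahu", "AHU"),
]

def canonical_name(raw: str) -> str:
    """Reduce a free-text asset name to TYPE-NUMBER, e.g. 'AHU #1' -> 'AHU-1'."""
    # Lowercase and collapse punctuation/whitespace runs into single spaces.
    text = re.sub(r"[^a-z0-9]+", " ", raw.lower()).strip()
    for phrase, code in TYPE_SYNONYMS:
        if text.startswith(phrase):
            number = re.search(r"\d+", text[len(phrase):])
            return f"{code}-{int(number.group())}" if number else code
    return raw.strip()  # unknown type: leave untouched for manual review

def group_variants(names):
    """Map each canonical name to the set of raw spellings found for it."""
    groups = defaultdict(set)
    for name in names:
        groups[canonical_name(name)].add(name)
    return dict(groups)

names = ["AHU-1", "Air Handler 1", "Air Handler Unit 1", "AHU #1", "AHU-2"]
variants = group_variants(names)
for canonical, spellings in variants.items():
    print(canonical, len(spellings), sorted(spellings))
```

In this toy example the four spellings from the paragraph above collapse into a single `AHU-1` group, which is the signal to standardize before any model sees the data.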

2. Asset Information Completeness Below 70%

Missing install dates? No nameplate data? Below 70% completeness means AI fills gaps with assumptions — and wrong guesses compound into systematically bad recommendations.
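There is more than one way to define completeness. One plausible definition is the share of tracked fields that are actually filled in across all asset records. The sketch below assumes that definition; the field names in `TRACKED_FIELDS` are hypothetical placeholders for whatever your CMMS tracks.

```python
# Hypothetical field list; substitute the attributes your CMMS tracks.
TRACKED_FIELDS = ["install_date", "manufacturer", "model", "serial_number"]

def is_filled(value) -> bool:
    """Treat None, empty strings, and whitespace-only strings as missing."""
    return value is not None and str(value).strip() != ""

def completeness(assets, fields=TRACKED_FIELDS) -> float:
    """Return the share of tracked fields that are filled, from 0.0 to 1.0."""
    total = len(assets) * len(fields)
    if total == 0:
        return 0.0
    filled = sum(is_filled(asset.get(f)) for asset in assets for f in fields)
    return filled / total

assets = [
    {"install_date": "2012-06-01", "manufacturer": "Acme", "model": "X1", "serial_number": "A100"},
    {"install_date": "", "manufacturer": "Acme", "model": None, "serial_number": "A101"},
]
score = completeness(assets)
print(f"Completeness: {score:.0%}")  # 6 of 8 fields filled -> 75%
```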

3. Systems That Allow Empty Required Fields

If your CMMS lets technicians skip required fields, they will. Lock down your schema. Required means required.
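Most CMMS platforms handle this through configuration rather than code, but the rule itself is simple. As an illustration only, here is a sketch of a validation step that refuses a record when a required field is blank. The field names and the `validate_record` helper are assumptions, not part of any particular product.

```python
# Hypothetical required fields for an asset record.
REQUIRED_FIELDS = ("asset_id", "asset_type", "install_date")

class MissingRequiredField(ValueError):
    """Raised when a record is submitted with a required field left empty."""

def validate_record(record: dict) -> dict:
    """Return the record unchanged, or raise if any required field is blank."""
    missing = [
        name for name in REQUIRED_FIELDS
        if record.get(name) is None or str(record[name]).strip() == ""
    ]
    if missing:
        raise MissingRequiredField(f"Missing required field(s): {', '.join(missing)}")
    return record

try:
    validate_record({"asset_id": "AHU-1", "asset_type": "AHU", "install_date": " "})
except MissingRequiredField as err:
    print(err)  # Missing required field(s): install_date
```

Rejecting the record at entry time, rather than flagging it later, is the point: the technician fixes it while standing in front of the equipment.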

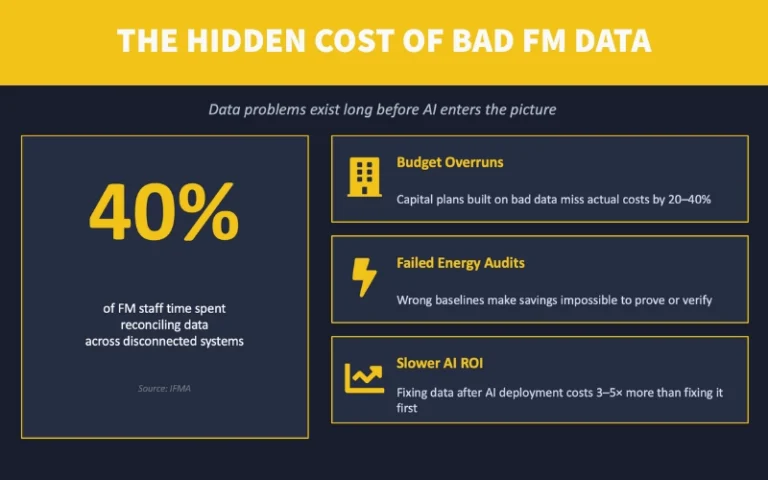

The Cost You're Already Paying for Incomplete Facilities Data

Here’s what frustrates me: teams act like data quality is an AI problem. It’s not. It’s costing you right now. FM teams burn hours every week reconciling data across disconnected systems, time that could go toward actual maintenance. Capital plans built on incomplete data routinely miss actual costs by double-digit percentages. And the same principle that governs software applies here: fixing a bug after release costs 3-5x more than catching it in development, and bad records are likewise cheaper to fix before an AI system is built on them than after.

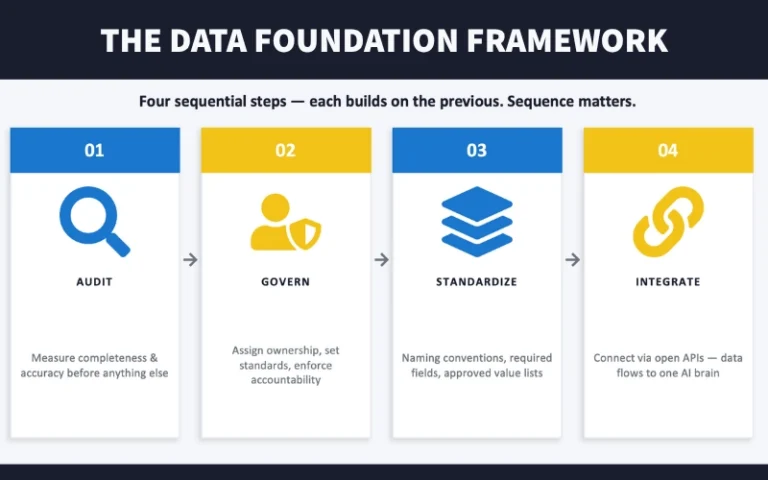

The Fix: Audit → Govern → Standardize → Integrate

We recommend this framework to every organization we work with. The sequence matters.

- Audit: Measure completeness, accuracy, and consistency before anything else. Target 90%+ field completion; manually verify 100 random assets against reality.

- Govern: Assign data stewards by domain. Make data quality a KPI. If nobody owns it, nobody fixes it.

- Standardize: Dropdowns instead of free text. Approved value lists. One classification standard (UNIFORMAT, OmniClass, or CSI) — enforced everywhere.

- Integrate: Require open APIs in all future procurement. Connect CMMS to BMS. Build toward a unified master record for each asset.
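Two of these steps lend themselves to a quick script. The sketch below shows one way to draw the 100-asset sample for the Audit step, tally how many records matched what was found on site, and list values that fall outside an approved list for the Standardize step. Every name here (`pick_audit_sample`, `accuracy`, `off_list_values`, `APPROVED_ASSET_TYPES`) is hypothetical, and the verification results are made up for illustration.

```python
import random

# Hypothetical approved value list for one dropdown field.
APPROVED_ASSET_TYPES = {"AHU", "Chiller", "Boiler", "Pump"}

def pick_audit_sample(asset_ids, size=100, seed=None):
    """Choose up to `size` distinct assets at random for on-site verification."""
    ids = list(asset_ids)
    rng = random.Random(seed)  # pass a seed to make the sample reproducible
    return rng.sample(ids, min(size, len(ids)))

def accuracy(check_results):
    """Share of sampled records that matched what was found in the field."""
    if not check_results:
        return 0.0
    return sum(check_results.values()) / len(check_results)

def off_list_values(records, field, approved):
    """Return the distinct values of `field` that are not on the approved list."""
    return {r.get(field) for r in records} - set(approved)

# Audit: sample 100 of 5,000 assets, then record whether each one checked out.
sample = pick_audit_sample(range(1, 5001), size=100, seed=42)
results = {asset_id: True for asset_id in sample}
results[sample[0]] = False  # illustrative: one record did not match reality
print(f"Verified accuracy: {accuracy(results):.0%}")

# Standardize: find free-text values that need mapping to the approved list.
records = [{"asset_type": "AHU"}, {"asset_type": "Air Handler"}]
print(off_list_values(records, "asset_type", APPROVED_ASSET_TYPES))
```

A fixed seed is worth using so that a second auditor can reproduce the same sample.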

Proof the Data Foundation Approach Works

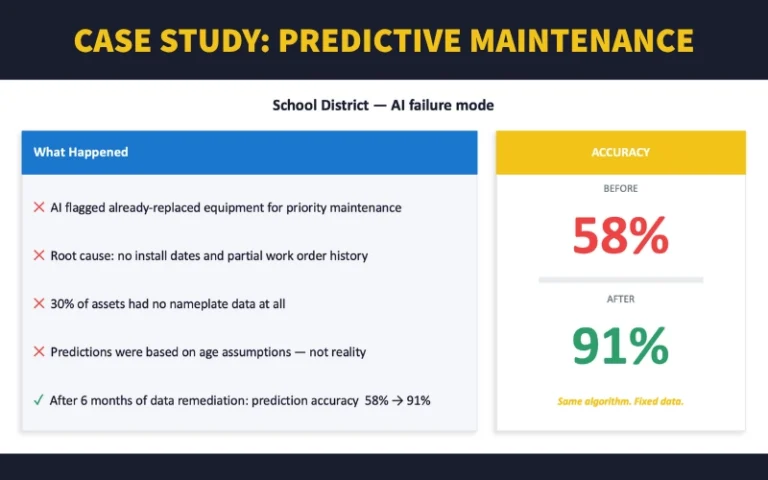

One school district learned this the hard way. They deployed predictive maintenance AI, and it flagged already-replaced equipment for priority work because its predictions were based on assumed ages, not reality. After six months of data remediation (not algorithm changes), accuracy jumped from 58% to 91%.

Same algorithm. Fixed data.

Now for the Shameless Plug

I co-founded AkitaBox because I kept seeing building owners struggle with their data, or the lack of it. We help facilities teams get their data right: from field capture with AkitaBox Capture, to maintaining integrity over time, to using that data for capital planning and maintenance optimization.

AkitaBox has been implemented in over half a billion square feet. We know that even the best AI is only as good as the data feeding it, and we built our platform to make sure that foundation is solid from day one.

Want to talk data strategy?